One of the joys of having access to a client's Analytics data is finding out what does and does not drive traffic and sales. I'm specifically interested in measuring the dynamic elements of a page to see what kind of impact they have. Product reviews created by customers fall into this category. I recently ran a measurement test to try to find the value of customer-created reviews.

Measuring Reviews by Score

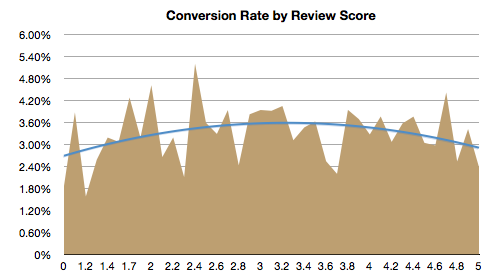

I tracked the raw average review score for every product page, with the averages ranging from zero to five. I then measured the conversion rate of those pages. These numbers take nothing else into account: not the price of a product, not how much a product is promoted via the homepage, not anything. The analysis is meant to measure only how much impact reviews have.
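The grouping described above can be sketched roughly as follows. This is a minimal illustration, not the actual tooling used for the client: the `(avg_rating, sessions, orders)` tuple layout, the function name, and the use of `None` for a product with no reviews are all assumptions made for the example.

```python
from collections import defaultdict

def conversion_by_rating(pages):
    """Pool sessions and orders by average rating, then compute conversion rate.

    `pages` is a hypothetical iterable of (avg_rating, sessions, orders)
    tuples, one per product page. avg_rating is None when the product has
    no reviews (an assumption for this sketch).
    """
    totals = defaultdict(lambda: [0, 0])  # bucket -> [sessions, orders]
    for avg_rating, sessions, orders in pages:
        # Keep unreviewed products in their own bucket; round the rest
        # to one decimal place so 3.24 and 3.2 land together.
        bucket = "no reviews" if avg_rating is None else round(avg_rating, 1)
        totals[bucket][0] += sessions
        totals[bucket][1] += orders
    # Conversion rate = orders / sessions; skip buckets with no traffic.
    return {b: o / s for b, (s, o) in totals.items() if s}

# Made-up sample data, purely for illustration.
sample = [(3.2, 100, 4), (3.24, 100, 2), (None, 200, 3), (5.0, 50, 1)]
rates = conversion_by_rating(sample)
```

Pooling sessions and orders before dividing means a high-traffic page counts for more than a low-traffic one, which is one of several reasonable choices here.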

There are a few interesting things to take note of in the graph. The first and most obvious is that the blue trend line is not linear: it curves upward toward what we consider the average ratings, 2.9 to 3.4, then falls back down as it approaches 5. I think that trust has a lot to do with these numbers. To a customer, a product with several reviews averaging 3.2 is worth more than a product with a lone 5-star review (most products with a 5-star average have only one review).

Additionally, having at least one review appears to play a significant role in whether or not a customer makes a purchase. The difference in the conversion curve is dramatic. The conversion rate ranges from 2.71% to 3.60%, a relative difference of about 33%.

Notice that the products with an average of 1 star (which means that 100% of that product's reviews were 1 star) have a conversion rate of 3.88%, while products with no ratings have only a 1.87% conversion rate. That's a difference of 107%.
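The percentage quoted here is a relative difference, not a difference in percentage points. A short sketch of that arithmetic, using the two rates from the paragraph above (the function name is just an illustrative choice):

```python
def relative_lift(baseline, variant):
    """Relative change of `variant` over `baseline`, as a fraction."""
    return (variant - baseline) / baseline

# No-review products convert at 1.87%, 1-star products at 3.88%.
lift = relative_lift(1.87, 3.88)
print(f"{lift:.1%}")  # prints 107.5%
```

In percentage points the gap is only 2.01, so it is worth being explicit about which kind of difference is being reported.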

I admit to being surprised to learn that a product with a 1-star average is more than twice as likely to sell as a product with no reviews.

Measuring Reviews by Review Quantity

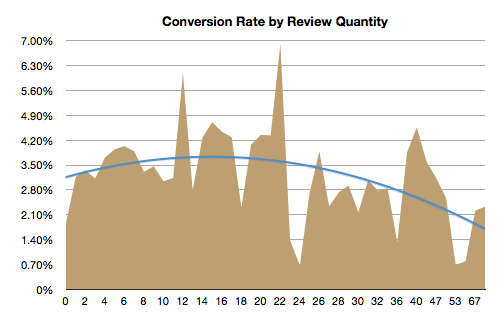

Another interesting measurement taken at the same time was the number of reviews a product has, to try to determine its effect on conversion rate.

It's interesting to see the general curve upward at first, and then to see it plummet as more reviews are written. The difference between 22 reviews (6.9%) and 23 reviews (1.38%) is startling. We've started referring to 23 reviews as the "Valley of Despair". It seems that customers are most likely to write a review when they have something negative to say, and when they say something negative they say it a lot.

Overall, though, this was an eye-opening measurement, and it has led to an overhaul of the reviews process for this client. In the future we'd like to measure what happens when a zero-review product earns its first review, to better rule out any popularity issues that may be skewing the numbers.
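One way that follow-up measurement might be set up is to compare a single product's conversion rate before and after the date of its first review. The sketch below assumes daily `(day, sessions, orders)` rows per product and a known first-review date; both the data layout and the function name are hypothetical, and this is only one possible approach.

```python
from datetime import date

def before_after_first_review(daily_stats, first_review_date):
    """Return (rate_before, rate_after) for one product.

    `daily_stats` is a hypothetical list of (day, sessions, orders) rows.
    Days on or after `first_review_date` count as "after".
    A rate is None when that period had no sessions.
    """
    before = [0, 0]  # [sessions, orders]
    after = [0, 0]
    for day, sessions, orders in daily_stats:
        bucket = before if day < first_review_date else after
        bucket[0] += sessions
        bucket[1] += orders

    def rate(bucket):
        return bucket[1] / bucket[0] if bucket[0] else None

    return rate(before), rate(after)

# Made-up sample data, purely for illustration.
stats = [
    (date(2024, 1, 1), 100, 2),
    (date(2024, 1, 2), 100, 1),
    (date(2024, 1, 3), 100, 4),
]
rate_before, rate_after = before_after_first_review(stats, date(2024, 1, 3))
```

Because the same product is compared against itself, differences in price or promotion between products matter less, although seasonality and promotions during the window could still affect the result.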