If you have a website then it is a good idea to keep a track of your search engine positions for key phrases that people search for and that bring you traffic. For any SEO professionals this is especially true.

Some people use commercial products for this task, others use online services, but there is a free way to do it. Well, free if you already own a copy of Microsoft Excel, that is!

Before we get into the solution, my apologies to the guys at Webmaster World for singling you out. That particular website ranks nicely in all the test terms, so it became useful to use as an example!

How to Use the Spreadsheet

- If you want to use this spreadsheet, first download it from here and open it up in Excel.

- Write your website's host name in cell B2.

- Enter your search terms in cells B4, C4, D4, and so on (as many as you like).

- Select the example rows 5-12 and press Del to clear the contents (If you use the right-click, delete approach the chart will become smaller, so resize it back).

- Press Ctrl-Shift-U to refresh the results.

All being well, a new line with rankings will be added, and the graph will be updated. Just like magic! (Note: If the macro is run again during the same day, it will not do anything.)

About the Solution

For this solution we used the default-sized result pages (10 results each) and not a 100-result page, because of Google's double-listing mechanism that puts results from the same site together. Your competitors who appear above you in the SERPs might get a double-listing in a larger page, and thus skew the rankings. We want to get the rankings as they are seen by real searchers.

The HTTP User agent is IE7. This is done because the default WinHTTP user agent string is blocked by Google. They really don't want bots to use their service, and rightfully so. This is also a good point to say, use this at your own risk, heh.

We used a configurable parameter, GOOGLE_WEBSERVER so you can choose which Google server to fetch the data from. This is useful if you are tracking rankings from a different region than your potential customers. For example, I am based in the UK but a lot of my customers are in the USA, so I need to see results from outside of my own data center. You can use a localized server like www.google.co.uk, or the Google Caffeine test server or any other specific one by IP.

Finally, to keep things fast and simple, the macro only looks at the first 3 result pages. This is an artificial limit that can be lifted by changing the call to GetCurrentRanking. We placed this limit to shorten the possible time it takes to run the macro. If your website is not found in the results, 0 will be displayed.

How it Works

If you look at the macro code, there is a fair amount going on under the hood, but it is fairly straight forward.

Essentially what we are doing here is scraping the search result then looking within the returned content for specific strings (our links). This approach can be used for a lot of useful purposes so it is worth investigating.

The Macro Code

The main macro subroutine AddCurrentRankingsRow first retrieves the website URL, and locates the data in the sheet. Then it adds a new line for today's date, and works on the term columns:

term = sheet.UsedRange.Cells(4, col).Text rank = GetCurrentRanking(term, myurl, 3) sheet.Cells(newRow, col).Formula = rank

For each term column, the subroutine fetches the term itself, then looks at the SERPs (Search Engine Result Pages) to find the rankings of our website, and finally writes it to the respective cell. The subroutine GetCurrentRanking figures out the ranking by iterating the SERPs as long as our website does not appear in the results. When our website appears in the results, it calculates and returns the ranking:

While pagenum < maxpages

pagenum = pagenum + 1

url = BuildSERPURL(term, start)

page = FetchPage(url)

If FindRank(page, myurl, count) Then

GetCurrentRanking = start + count

Exit Function

End If

start = start + count

Wend

GetCurrentRanking uses three handy but simple utility functions:

- BuildSERPURL - This generates the URL of a SERP for a specific term, starting at a certain result number.

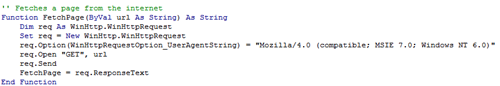

- FetchPage - Uses Microsoft's WinHttp library to do a HTTP GET request and fetch the SERP's HTML contents.

- FindRank - Finds the position of our website in the organic results in a page.

FindRank is specific to Google results. It disregards the paid advertisements and counts result links. The organic result links are in the form ...

<h3 class="r"><a href="URL">...

... so the function just extracts the URL from those links. This function can be easily adapted to other search engines like Bing or Ask.com, but it will require some programming tweaks to work.

How to Run the Macro Automatically

You may want to run the macro automatically, without the need to press Ctrl-Shift-U. In order to do so, add the following subroutine after all the code:

Private Sub Workbook_Open()

AddCurrentRankingsRow

End Sub

This will run the macro every time you open the file, which means you will always see the most updated data.

Summary

This project serves as a good example for how you can use Excel to scrape search results and monitor your rankings.

I am sure you can think of other ways this could be used, perhaps as part of your link building or reputation management efforts?

Please share your thoughts and ideas in the comments ...

P.S.

- I've updated the article with slight changes that are required for Google's new search results page.

- The file is now in the old XLS format instead of XLSX.

- URL Encoding is done by WinHTTP now.