- Keynote speech on Wolfram Alpha's computational engine

- SEO Ranking Factors in 2010

- Leveraging Digital Assets for Maximum SEO Impact

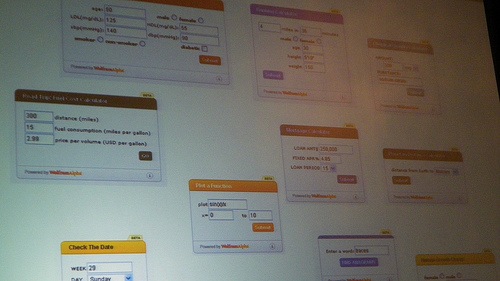

I thoroughly enjoyed Barak Berkowitz's keynote speech on the Wolfram Alpha computational engine. It helped me widen my knowledge about this engine, its mission and the specific niche services it provides.

First, let's emphasize that Wolfram Alpha is not a search engine, as Barak clearly pointed out during the keynote, but a computational knowledge engine that can help you find sources of information.

In my opinion, it was appropriate to bring this keynote to SMX London because, in a way, I see WA as a search engine, but let's first look at other comments and Barak B.'s own words about WA.

Barak B. showcases Wolfram Alpha's computational functionality through two initial examples:

1. a graphically illustrated example of detailed weather forecast in London

2. comparing gross domestic product between Japan and China via charts and graphs showing the differences or similar patterns between the two countries

3. And a third one ("am I drunk") to help all SEOs work out how drunk they'll be after the post-event LondonSEO free beer event on Tuesday evening. Barak B. suggests that he might have been drunk when he signed up for the managing director role at WA, and uses that statement to put the data into perspective.

From this point on, I understood that the whole idea of this keynote was to raise the profile of Wolfram Alpha as a computational engine by showing off its mathematical and computational capabilities, so I relaxed and quite enjoyed the session despite the lack of an SEO perspective.

Some of WA's features and aims are:

It computes the answer to your questions using its built-in algorithms and a collection of data.

It goes beyond facts and figures: it processes, computes and contrasts data on the fly.

WA handles about two trillion pieces of data in its system. The data is managed by data curators, who are responsible for normalizing it. E.g., gold data for Japan is not the same as for China.

To expand so as to offer WA's functionality more widely to interested developers. There is an iPhone and iPad application, and there is even an API.

Widgets: they will launch widgets on different types of data: check the date, plot a function, human growth. Widgets will allow people with expertise in different areas to embed them and get easy access to regular information.

WA aims to 'democratize knowledge and so change the world' (new slide)

To make all the factual knowledge of mankind available to all people. Google, by contrast, only gathers, indexes and organizes information, which is a completely different mission.

Part of the core engine for WA is to take various bits of information that fall into certain criteria and turn them into charts.

Barak B. encourages us to think of WA as a FACT engine, which will change the web forever.

Barak B. says that there will always be something difficult to predict: how people will use the technology.

Q&A

Mikkel deMib asked how Wolfram Alpha is going to deal with the legal aspects of scraping data from various sources in order to make the calculations needed to return the results. Mikkel added that in Denmark, for example, it is not always legal to take the data you need from public sources and use it for your own ends.

Barak B. replied that data picked from US government sources is free to be scraped. They always make sure that the data is picked on safe grounds and in compliance with local laws, but they are also prepared to buy data if they have to.

Richard Baxter asked how to share data with WA and what criteria need to be met in order to use their data. Answer: in the future, data owners will be able to send their datasets to WA via spreadsheets. Such a system is not yet in place.

Jaamit asked if there was a potential problem that Google or any of the major search engines might want to compute and deliver data along the same lines as WA. Barak B. did not seem concerned about this and clarified that there is actually no threat there but an opportunity for partnership.

He stated that Google's only task, as a search engine, is indexing pages, simply finding information and returning it. WA does a bit more than that by computing the results.

Another question, kind of expected, was about how WA sustains itself financially and whether they are looking at revenue-making models. The answer was yes: they are contemplating several options to monetize the venture, including placing advertisers' ads on the search results.

Q: what is the key target market for WA right now? This is not defined at the moment, as it could potentially be any information-hungry consumer. They want to focus their marketing on a broader range of people, not just specific segments of dedicated dataset handlers.

Q: are they planning to target other markets and languages? Yes, they are, but it is not easy; it is a challenge that they are prepared to take on. German will most definitely be the first language they look to incorporate, as German speakers are the second largest group of users of their product.

Conclusion

Personally, I still see WA as an actual search engine because, despite its unique and specific ability to compute data and deliver it in a graphical and orderly format, the WA engine is used to search and find. This conference is about Search and not just SEO, so this keynote got my thumbs up and a rating of 6 on a scale of 0 to 10, taking into account several criteria: interesting, informative, takeaways, etc.

I attended all 4 sessions from the Advanced Organic Track:

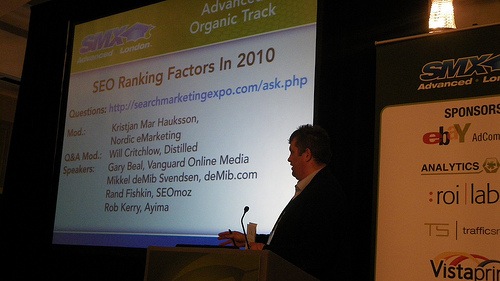

SEO RANKING FACTORS IN 2010

Introduced by Kristjan Mar Hauksson, Rand Fishkin kicks off the session with the kind of slides everyone expects: cool graphs and charts to support his views on the current SEO ranking factors.

Rand Fishkin, from SEOmoz, kicked off his presentation by mentioning the 'reasonable surfer model' theory from Bill Slawski (SEO by the Sea) and recommending the article to the audience. In this article Bill states that some important things to look out for in links are font size and anchor text.

The main takeaways of Rand's presentation were:

1. Links in content are considerably more valuable than stand-alone links. This one makes all the sense in the world to me.

2. Link position matters in that links nearer the top of the page carry slightly more weight than links lower down. He supports this view with tests he has carried out.

3. Twitter links: does Twitter data influence the SERPs? Twitter links definitely influence the rankings if the Query Deserves Freshness (QDF) algorithm is triggered. Rand thinks that this is so since Bing and Google have made deals with Twitter.

4. Rand thinks that Twitter is indeed cannibalizing the link graphs.

5. Facebook: does Facebook's social graph have enough adoption to be useful? Is the data from Like buttons and social plugins flowing to Google?

6. As Bing has shown an interest in Facebook, could this be Bing's secret weapon?

Rand asks whether Facebook will eventually build their own search engine. He personally doesn't think so, as Facebook would need their own crawling technology and infrastructure, and that is not aligned with their business model.

With regards to on-page correlations to ranking factors, these are Rand's views:

1. The position of the target keyword at the beginning of the title tag does have an impact on rankings, contrary to what he originally thought. He admits he was wrong. I think it is his candor in admitting mistakes between tests that people appreciate and value.

2. H1s: not that important. However, Rand clarifies that target keywords embedded in the H1 tag and placed high up in the page do have an impact on ranking, but the H1 tag itself does not.

3. Alt attributes: embedding keywords here is potentially very useful. This was already covered on the SEOmoz blog and in the SEOmoz Pro training in October 2009.

4. Keyword density: Rand doesn't think that there is a correlation between keyword density percentage and rankings.
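
For anyone who wants to check their own pages against points 1 and 4 above, here is a minimal Python sketch. It is my own illustration, not something Rand showed: the function names and the simple word-based definition of density are assumptions, and it says nothing about how a search engine actually measures either signal.

```python
import re


def _words(text: str) -> list:
    """Lower-case the text and split it into plain word tokens."""
    return re.findall(r"\w+", text.lower())


def keyword_position_in_title(title: str, keyword: str) -> int:
    """Return the word index where the keyword phrase starts in the title.

    0 means the title begins with the keyword; -1 means it is absent.
    """
    words, target = _words(title), _words(keyword)
    if not target:
        return -1
    for i in range(len(words) - len(target) + 1):
        if words[i:i + len(target)] == target:
            return i
    return -1


def keyword_density(text: str, keyword: str) -> float:
    """Share of the words in the text taken up by the keyword phrase (0.0 to 1.0)."""
    words, target = _words(text), _words(keyword)
    if not words or not target:
        return 0.0
    hits = sum(
        1
        for i in range(len(words) - len(target) + 1)
        if words[i:i + len(target)] == target
    )
    return hits * len(target) / len(words)
```

A title such as "Learn English Online | British Council" returns position 0 for "learn english", while "British Council: Learn English" returns 2, which is the kind of difference point 1 is about.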

Conclusion: judging from Rand's presentation, SEO ranking factors for 2010 seemed to be pretty much the same as those for 2009, except for the insights on Facebook and the tip about the reasonable surfer model theory, which I plan to read thoroughly as soon as I get a free moment. Rand always tries to be funny, and tries hard, but he is not good at it. What he is good at, however, is presenting facts, at which he actually excels.

Rob Kerry, Ayima

Rob Kerry from Ayima takes over with probably one of the most useful presentations of the entire two-day conference, with findings presumably based on empirical data derived from his portfolio's top clients.

The main points he made were:

1. Top takeaway: 301 redirects do not carry as much weight as they used to. He spices up this statement with a top liner on his first slide that reads: "Death of the External 301". This means that Google is devaluing this technique, perhaps due to extensive misuse, particularly on affiliate sites.

2. When site migrations are necessary, Rob recommends naturally regaining existing inbound links pointing to the old site by requesting this from the existing linkerati or by doing additional link building for the new site.

3. Rob points out that January 2009 was the month in which Google slashed the link juice passed via 301-redirected links, and that this hit big brands that re-branded or changed their domain name at the end of 2009. These companies will have experienced a double decline in traffic: a first one after the migration, while Google re-instated domain authority, and a second one in January following Google's slash on the authority passed by 301 redirects. Top takeaway here: if you work for a big brand that boasts a complex web structure, stop messing with site migrations and internal web revamps that tend to throw away the multiple inbound links you may have to those pages. 301 redirects are not the right solution any more, though there are still solid usability reasons why you should use them.

4. Third top takeaway: it is becoming increasingly difficult to rank internal pages for competitive terms. Thank you! I have indeed noticed that phenomenon on some of the British Council Teaching English and Learn English websites, where we have been losing rankings previously gained by internal pages.

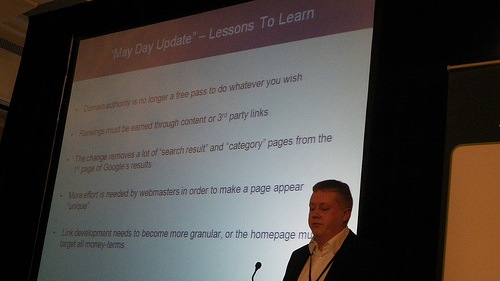

5. Rob Kerry ended his presentation with a slide alluding to the May Day update and the lessons to learn from it: internal pages targeting the long tail will need more unique content, direct links from the homepage or heavier quality link building to those deeper, long-tail-targeting pages. Takeaway: shift your term-targeting strategy where possible to make your homepage target the top money terms and valuable short-tail traffic.

If you have not had the chance to read about the May Day Google update, likely brought about by Caffeine's new algorithms, and the drop in traffic from long-tail keywords perceived and reported by webmasters between 28 April and 3 May, I recommend you read this discussion at WebmasterWorld as well as this Whiteboard Friday video on SEOmoz. My takeaway from reading that discussion on the WebmasterWorld forum: long-tail traffic in most cases relies on internal link juice.

Mikkel deMib Svendsen, www.demib.com

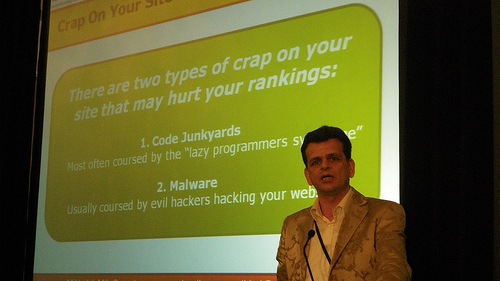

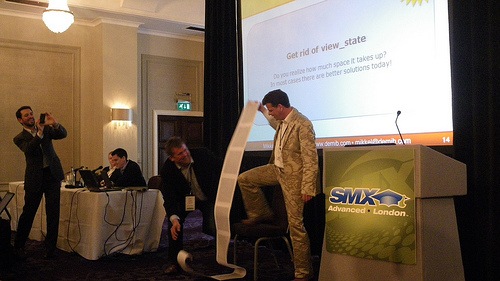

"Crap on your site can hurt your rankings": that's what Mikkel deMib's first presentation slide read.

deMib's main points during his presentation were:

- clean up your code: get into the habit of checking your source code often, as this is how malware can be detected

- if Google finds malicious or funny code on your website, it is not going to rank your site, simply because it does not want users to go there.

- keep your server software and all applications updated. Check https://secunia.com/ for vulnerabilities in your server and application software

- Size does matter, and small is beautiful! Site speed has always been a ranking factor. Throughout his presentation deMib mentions this post about website speed at Google Webmaster Central, and this other one from Yahoo developer on website performance.

- use a content delivery network (CDN) for images, videos, Flash, etc. I personally have a lot to say about CDNs, as we use Akamai at the cultural relations organization where I work: it does help speed up the delivery of content to users, but in terms of geotargeting it causes mayhem because of its poorly assigned IP targeting.

- compress your objects and HTTP responses (e.g. gzip whenever possible)

- keep all your JavaScript in one external file and all your CSS in one external .css file too

- get rid of all the non-essential comments throughout your code

- remove all empty containers (divs)

- remove all unnecessary meta tags.

- minify or obfuscate code by removing blank space.

- deMib says that the quality of the code is not so important as a ranking factor, as 90% of sites do not actually validate. True, but I still like to keep the code validated, as it keeps me in a best-practice way of working and I think it can help you avoid possible crawlability issues.
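
To get a feel for how much the clean-up and compression tips above can save, here is a minimal Python sketch. It is my own illustration rather than anything deMib presented: `strip_page_weight` and `size_report` are hypothetical names, and the comment and whitespace stripping is deliberately naive (it would also mangle `<pre>` blocks, inline scripts and conditional comments), so treat it as a measuring aid and not as a production minifier.

```python
import gzip
import re


def strip_page_weight(html: str) -> str:
    """Remove HTML comments and collapse runs of whitespace into single spaces."""
    no_comments = re.sub(r"<!--.*?-->", "", html, flags=re.DOTALL)
    return re.sub(r"\s+", " ", no_comments).strip()


def size_report(html: str) -> dict:
    """Compare the page size as-is, after stripping, and after stripping plus gzip."""
    raw = html.encode("utf-8")
    slim = strip_page_weight(html).encode("utf-8")
    return {
        "raw_bytes": len(raw),
        "stripped_bytes": len(slim),
        "gzipped_bytes": len(gzip.compress(slim)),
    }
```

Running `size_report` on the saved source of a page gives three numbers to compare before and after a clean-up.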

At some point deMib picks on .NET systems and points at the lousy length of the source produced by viewstate. This can badly harm your website. Takeaway: discuss with your developer whether viewstate is being used on your sites and whether it is possible to minimize the amount of viewstate data showing in each page. Read this post on ASP.NET viewstate on the Freshegg website for further information on workarounds to this problem.
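
Before having that conversation with your developer, it helps to know how big the problem is. The following is a minimal Python sketch of my own, assuming the page carries its viewstate in the usual ASP.NET hidden input named `__VIEWSTATE`; the class and function names are hypothetical.

```python
from html.parser import HTMLParser


class ViewStateFinder(HTMLParser):
    """Collect the value of the hidden __VIEWSTATE input, if the page has one."""

    def __init__(self):
        super().__init__()
        self.viewstate = ""

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "input" and attributes.get("name") == "__VIEWSTATE":
            self.viewstate = attributes.get("value") or ""


def viewstate_share(html: str) -> float:
    """Fraction of the page source (in characters) taken up by viewstate data."""
    if not html:
        return 0.0
    finder = ViewStateFinder()
    finder.feed(html)
    return len(finder.viewstate) / len(html)
```

A result of 0.5 would mean that half of what you serve on that page is viewstate rather than content.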

Q&A for this session:

Rob Kerry makes the point that it is not worth relying solely on domain authority any more.

Takeaway: with regards to canonical redirects being a replacement for 301s, yes, this can be a solution worth testing, but it would be much better to try and regain the links pointing to the old site on the new one via traditional methods. Rob states that it is not a coincidence that Google announced the new cross-domain canonical tag in December 2009.

Takeaway: at this point I asked myself whether canonical redirects were passing link juice, and I then heard Rob saying he had experienced link juice passing through canonical redirects on his sites.

There were some discrepancies between deMib, Rob and Rand as to whether canonical redirects were the right replacement for 301 redirects, which is to be expected of opinions on recent algorithm changes.

Rand mentions that taking the Query Deserves Freshness algorithm into account is very important if your business is in the news and media industry.
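
If you want to test this on your own migrated pages, a first step is simply checking which pages declare a canonical URL on another domain. Here is a minimal Python sketch of my own, assuming the canonical is declared with a `<link rel="canonical" href="...">` element in the page source; the names `CanonicalFinder` and `canonical_info` are hypothetical, and the script does not tell you whether any link juice is actually passed.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class CanonicalFinder(HTMLParser):
    """Remember the href of the first <link rel="canonical"> element."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel_values and self.canonical is None:
            self.canonical = attributes.get("href")


def canonical_info(page_url: str, html: str):
    """Return (absolute canonical URL, is_cross_domain), or (None, False) if absent."""
    finder = CanonicalFinder()
    finder.feed(html)
    if not finder.canonical:
        return None, False
    # Resolve relative hrefs against the URL of the page itself.
    canonical = urljoin(page_url, finder.canonical)
    cross_domain = urlparse(canonical).netloc.lower() != urlparse(page_url).netloc.lower()
    return canonical, cross_domain
```

For a page on the old domain whose canonical points at the new domain, the second value comes back as `True`.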

deMib agrees that it is important to keep your web pages updated with content generated via users, yourself, dynamic elements or even scraping it!

K.M. Hauksson ended the session saying that they will be auctioning the long paper print out that @Demib used to make the point about the ridiculously long source code pages produced by .NET systems using viewstate.

LEVERAGING DIGITAL ASSETS FOR MAXIMUM SEO IMPACT

Rob Sheppard, Ask Jeeves

The session starts with a presentation from Rob Sheppard of Ask Jeeves, which covered the basic aspects of Universal search and why it is important in too much detail. This was not useful to most attendees, because what most of them wanted was actionable tips they could put into practice in their campaigns.

SMX London has been tagged as Advanced and therefore all beginners' sessions are skipped; however, there was still a fair amount of beginners' content.

I inevitably found myself at times during this session before lunch wondering if I should have been in the Amazing New PPC Tactics session, which apparently was packed with actionable advice.

Takeaway from Rob Sheppard's presentation: when working on producing and optimizing your digital assets for universal search results, aim to answer user questions about your products and services by reading places like Yahoo Answers.

Ian Strain-Seymour, Bazaarvoice

Ian talked about customer reviews and microformats in the form of the voice of the customer. The best points made during his presentation were:

Find the weak spot in your competitors and exploit that area, and repeat as many times as possible to gain market share and customer trust.

Universal search presents a unique opportunity to showcase digital assets your company may boast which your competitors don't. Customer reviews, Q&A, blog posts and comments are all opportunities to display your content. He places special emphasis on microformats. At this point, Rob Kerry mentioned a good article on microformats on Richard's SEOgadget blog, and I tweeted one of the links to Richard's posts on microformats. Later I realized I could have tweeted the entire tag page or category page on microformats.

Shmulik Weller's replacement, Sunday Sky

At the point of this presentation, Twitter activity on whether this should be an advanced SMX conference was growing. The importance of being present in Universal search was being overstressed, and most attendees probably already knew that it was very important to optimize all digital assets to try and dominate the SERPs.

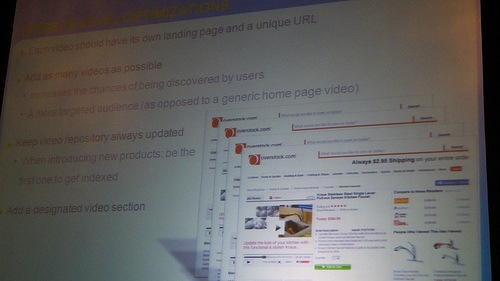

I am not sure, but I think I heard the speaker say that people search for videos on the web and that writing the keyword "video" in your video title tag could therefore be a good idea. Someone correct me if this is wrong, please.

It is a very good idea to add as many videos as possible to your website to enhance your chances of being visible on the SERPs.

Takeaway: in the Q&A, someone asked whether achieving video visibility on the SERPs could enhance your chances of ranking well in the text results. The speaker feels it is quite likely.

Rob Kerry, Ayima

I waited impatiently for Rob's turn, as I knew he would have some good pointers to note down and turn into actionable tactics.

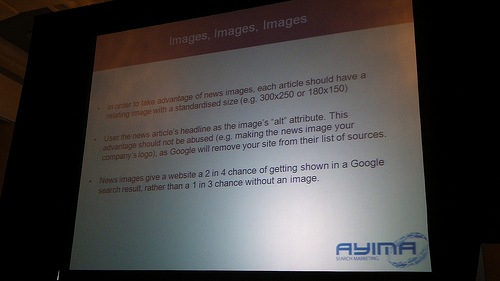

Rob's presentation focused on news optimization and Google News. Here are the main learning points I got from this presentation:

1. Presumably, clicks via news results in the Google News SERPs can help your overall SEO, as Google takes into account the overall number of clickthroughs to your site.

2. Press releases optimized for visibility in the Google News results, albeit short-term, help you get visibility for your competitive targeted keywords.

3. Try and have the names of different authors, even if they are not real, on your optimised news articles. This will make Google think that you have a team of writers and are serious about writing news content for public distribution.

4. Having the right-sized image accompanying your press releases is very important, as it increases the chances of your press release being more visible.

5. Takeaway: every article you want to optimize should have a related image with a standardized size: 300x250 or 180x150

6. Put the news title headline as the alt attribute in the image but without abusing this technique or you will be penalized by Google.
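
Points 5 and 6 are easy to check automatically across a batch of news articles. Here is a minimal Python sketch of my own: the two sizes are the ones Rob quoted, but everything else (the names, and reading the size from the `width` and `height` attributes rather than from the image file itself) is an assumption made for illustration.

```python
from html.parser import HTMLParser

# The two standardized sizes mentioned in the presentation (width, height).
RECOMMENDED_SIZES = {(300, 250), (180, 150)}


class ImageCollector(HTMLParser):
    """Collect the attributes of every <img> element in the article."""

    def __init__(self):
        super().__init__()
        self.images = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            self.images.append(dict(attrs))


def audit_images(html: str, headline: str) -> list:
    """Report, per image, whether its declared size and alt text follow the tips."""
    collector = ImageCollector()
    collector.feed(html)
    report = []
    for attributes in collector.images:
        try:
            size = (int(attributes.get("width") or ""), int(attributes.get("height") or ""))
        except ValueError:
            size = None  # missing or non-numeric width/height attribute
        report.append({
            "src": attributes.get("src"),
            "size_ok": size in RECOMMENDED_SIZES,
            "alt_ok": (attributes.get("alt") or "").strip() == headline.strip(),
        })
    return report
```

Any entry with `size_ok` or `alt_ok` set to `False` is an image worth a second look before the article goes out.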

During the Q&A, I got the impression that most of the questions went to Rob Kerry:

It is a good idea to do link building for your YouTube-hosted videos in order to get them on the SERPs.

There were some exchanges and view points about buying user views on your videos, video ratings, and fake Tweets to help you rank or for online reputation management purposes.