Eye tracking by SMI Eye Tracking

Sounds interesting, doesn't it? One of Google's recent patent filings hints that the search giant could work out what a voice query refers to, and interpret it semantically, simply by analyzing the order in which the user's gaze moves across the screen.

For anyone interested in voice search SEO, this patent offers plenty of insight. Below, we simplify what the patent describes.

A Look Into Google Voice Search Powered By Knowledge Graph

The Google voice search apps for Android, iPad, iPhone and Windows 8 accept spoken queries instead of traditional typed ones, and they can read answers aloud clearly. But voice search still cannot answer every query. Many questions call for research before the user can reach a conclusion, and these informational queries are the hardest for Google voice search to handle.

The Hummingbird update, launched in late August 2013, opened up new ways to understand the intent behind spoken or typed queries. An intent algorithm processes queries using Google's built-in database of entities, known as the Knowledge Graph, which helps identify which entity the user is referring to when a query is submitted. The Knowledge Graph stores not only the entities but also the relationships between them, which makes it easier for Google to suggest options related to the user's main query.
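The idea of an entity store that also records relationships can be illustrated with a toy graph. This is a minimal sketch under our own assumptions: the class `EntityGraph`, its methods and the sample entities are hypothetical and say nothing about how Google's Knowledge Graph is actually built or queried.

```python
from collections import defaultdict


class EntityGraph:
    """Hypothetical toy entity store: named, typed entities plus relationships."""

    def __init__(self):
        self.entities = {}               # entity name -> entity type
        self.edges = defaultdict(list)   # entity name -> [(relation, other entity)]

    def add_entity(self, name, kind):
        self.entities[name] = kind

    def relate(self, subject, relation, obj):
        # Record the edge from both ends so either entity can be the lookup key.
        self.edges[subject].append((relation, obj))
        self.edges[obj].append(("inverse:" + relation, subject))

    def resolve(self, mention):
        # Naive entity resolution: case-insensitive exact name match.
        for name in self.entities:
            if name.lower() == mention.strip().lower():
                return name
        return None

    def related(self, name):
        # .get() avoids creating empty entries in the defaultdict.
        return list(self.edges.get(name, []))


kg = EntityGraph()
kg.add_entity("Eiffel Tower", "Landmark")
kg.add_entity("Paris", "City")
kg.add_entity("France", "Country")
kg.relate("Eiffel Tower", "located_in", "Paris")
kg.relate("Paris", "capital_of", "France")

entity = kg.resolve("eiffel tower")
print(entity, kg.related(entity))
```

Once a mention resolves to an entity, its stored relationships are what let a search engine offer related suggestions (here, Paris and, one hop further, France).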

Now semantic search is going deeper still: it has reached the point where the user's eyes can be tracked to work out which object the user is referring to when giving a voice command.

Difficulty In Processing Voice Queries

Since Hummingbird rolled out, result quality for traditional (typed) queries has improved thanks to semantic analysis of those queries. The intent behind voice queries, however, is still much harder to predict.

That is because typed queries carry substantial hints: users type keywords or object names. Voice searches are more casual and often minimal, which is why Google finds voice queries harder to process than typed ones.

Semantic Interpretation Of Voice Queries Using Gaze Attention Map

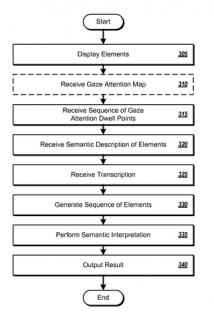

This is where semantic interpretation of voice queries using a gaze attention map comes in, a component that could make Hummingbird even faster and more precise. The steps below outline how the patent describes using eye gaze to establish a semantic relationship between on-screen objects and the user's query.

Step 1- The user speaks a voice command, which is interpreted by the voice recognition system.

Step 2- An eye tracking system on the device follows the user's eyes to determine which displayed object the user is looking at. It generates the gaze attention map by identifying and monitoring gaze points on the device screen.

Step 3- The complete set of gaze dwell points (places where the user's gaze rested) is obtained in the order in which they occurred.

Step 4- A semantic description of each gazed-at element is obtained from its metadata.

Step 5- A transcription of the voice command is generated.

Step 6- An ordered sequence of elements is built from the dwell positions in the gaze attention map, together with each element's semantic metadata.

Step 7- A semantic interpretation is performed by matching the terms in the transcribed command against the elements produced in Step 6. This determines which element the user is referring to in the voice command.

Step 8- The final result is generated.
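Steps 2 and 3 can be sketched in code. The patent, as summarized here, does not say how dwell points are detected, so this minimal sketch swaps in dispersion-threshold identification (I-DT), a common fixation-detection technique from eye-tracking research: a run of gaze samples counts as a dwell if it lasts at least `min_duration_ms` and stays within `max_dispersion` pixels. The thresholds, the sample format, the element ids and the screen boxes are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Dwell:
    x: float            # centroid of the clustered gaze samples (screen pixels)
    y: float
    start_ms: float
    duration_ms: float


def _dispersion(points):
    # I-DT dispersion: horizontal spread plus vertical spread of the window.
    xs = [p[1] for p in points]
    ys = [p[2] for p in points]
    return (max(xs) - min(xs)) + (max(ys) - min(ys))


def detect_dwells(samples, max_dispersion=40.0, min_duration_ms=100.0):
    """Dispersion-threshold (I-DT) fixation detection.

    samples: (timestamp_ms, x, y) tuples sorted by time.
    Returns dwell points in the order they occurred.
    """
    dwells, i, n = [], 0, len(samples)
    while i < n:
        # Grow a window until it spans at least min_duration_ms.
        j = i
        while j < n and samples[j][0] - samples[i][0] < min_duration_ms:
            j += 1
        if j >= n:
            break  # not enough data left for another dwell
        if _dispersion(samples[i:j + 1]) > max_dispersion:
            i += 1  # eye still moving: slide the window by one sample
            continue
        # Extend the window while the samples stay tightly clustered.
        while j + 1 < n and _dispersion(samples[i:j + 2]) <= max_dispersion:
            j += 1
        window = samples[i:j + 1]
        dwells.append(Dwell(
            x=sum(p[1] for p in window) / len(window),
            y=sum(p[2] for p in window) / len(window),
            start_ms=window[0][0],
            duration_ms=window[-1][0] - window[0][0],
        ))
        i = j + 1
    return dwells


def map_to_elements(dwells, boxes):
    """Hit-test each dwell against element boxes (left, top, right, bottom)."""
    hits = []
    for d in dwells:
        for element_id, (left, top, right, bottom) in boxes.items():
            if left <= d.x <= right and top <= d.y <= bottom:
                hits.append((element_id, d.duration_ms))
                break
    return hits


# Hypothetical gaze trace sampled every 10 ms: a long look at the blue
# tracksuit, one sample in transit, then a shorter look at the jacket.
samples = ([(t, 50 + (t % 20) / 10, 50) for t in range(0, 410, 10)]
           + [(420, 150, 50)]
           + [(t, 250, 52) for t in range(450, 610, 10)])
boxes = {"blue_tracksuit": (0, 0, 100, 100), "jacket": (200, 0, 300, 100)}
print(map_to_elements(detect_dwells(samples), boxes))
```

The result is a time-ordered list of (element, dwell time) pairs, the raw material for a gaze attention map. Real eye-tracking data is noisier, so the thresholds would need tuning per device.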

The chart below shows the steps that happen whenever the user enters a voice query.

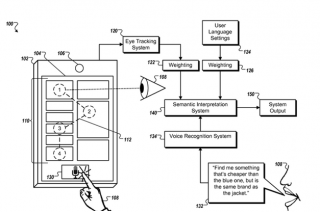
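Step 6 can be illustrated with a small helper that merges repeated looks at the same element, orders elements by when they were first looked at, and attaches each element's metadata. The names `GazeElement` and `build_element_sequence`, the metadata fields and all values (including the placeholder brand "BrandX") are hypothetical; this is a sketch of the idea, not the patent's data model.

```python
from dataclasses import dataclass, field


@dataclass
class GazeElement:
    element_id: str
    first_seen_ms: float
    total_dwell_ms: float = 0.0
    metadata: dict = field(default_factory=dict)


def build_element_sequence(hits, metadata_by_id):
    """Turn dwell hits into an ordered element sequence with metadata.

    hits: (element_id, start_ms, duration_ms) tuples, in any order.
    metadata_by_id: element_id -> semantic metadata dict.
    Elements are ordered by when the user first looked at them; repeated
    looks at the same element add to its total dwell time.
    """
    by_id = {}
    for element_id, start_ms, duration_ms in sorted(hits, key=lambda h: h[1]):
        if element_id not in by_id:
            by_id[element_id] = GazeElement(
                element_id, start_ms,
                metadata=dict(metadata_by_id.get(element_id, {})))
        by_id[element_id].total_dwell_ms += duration_ms
    return list(by_id.values())  # dicts keep insertion (first-look) order


# Hypothetical dwell hits and metadata.
hits = [("jacket", 450, 150), ("tracksuit_blue", 0, 400), ("tracksuit_blue", 700, 250)]
metadata = {
    "tracksuit_blue": {"type": "tracksuit", "color": "blue", "brand": "BrandX", "price": 200.0},
    "jacket": {"type": "jacket", "color": "black", "brand": "Adidas", "price": 150.0},
}
for el in build_element_sequence(hits, metadata):
    print(el.element_id, el.total_dwell_ms, el.metadata["brand"])
```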

A Real World Example

Suppose your screen displays three tracksuits in different colors, two pairs of shoes and one jacket. You ask Google: "Find me something that's cheaper than the blue one, but is the same brand as the jacket." A query like this would confuse most search engines, but the gaze interpretation technique makes it possible to process.

As a first step, Google receives the voice command entered by the user and generates a gaze attention map consisting of all the points which the user gazed at.

The gazed-at elements are then identified and the semantic metadata of each object is retrieved. In this case six objects are on screen, so six sets of metadata (price, color, brand name, size and so on) are obtained.

Next, the element with the longest gaze duration is matched with the reference in the query. Here, the user looked at the blue tracksuit much longer than at the other objects, so Google concludes that "the blue one" refers to the tracksuit and not to the shoes, which the user never looked at. The jacket is identified the same way, and the relevant metadata is retrieved for both objects: in this case, the tracksuit's price (suppose it is $200) and the jacket's brand (suppose it is Adidas).
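The matching in this example can be sketched as a simple rule: keep the on-screen elements whose metadata matches every descriptive word in the phrase, then pick the one the user looked at longest. The function `resolve_reference`, the stopword list, the metadata fields and the dwell times below are assumptions made for illustration; only the $200 price and the Adidas jacket come from the example, and the other brands and prices are invented placeholders. A real system would rely on far richer language understanding.

```python
STOPWORDS = {"the", "a", "an", "one", "that", "this"}
DESCRIPTIVE_FIELDS = ("type", "color", "brand")


def resolve_reference(phrase, elements):
    """Return the id of the on-screen element a phrase most likely refers to.

    A candidate must match every descriptive word in the phrase; among the
    candidates, the one the user looked at longest wins. Returns None if
    nothing matches.
    """
    words = [w for w in phrase.lower().split() if w not in STOPWORDS]

    def matches(el):
        values = {str(el.get(f, "")).lower() for f in DESCRIPTIVE_FIELDS}
        return all(w in values for w in words)

    candidates = [el for el in elements if matches(el)]
    if not candidates:
        return None
    return max(candidates, key=lambda el: el["dwell_ms"])["id"]


# Six hypothetical on-screen objects with gaze dwell times in milliseconds.
elements = [
    {"id": "tracksuit_blue", "type": "tracksuit", "color": "blue", "brand": "BrandX", "price": 200.0, "dwell_ms": 650},
    {"id": "tracksuit_red", "type": "tracksuit", "color": "red", "brand": "BrandX", "price": 180.0, "dwell_ms": 120},
    {"id": "tracksuit_grey", "type": "tracksuit", "color": "grey", "brand": "BrandY", "price": 150.0, "dwell_ms": 0},
    {"id": "shoes_blue", "type": "shoes", "color": "blue", "brand": "BrandY", "price": 90.0, "dwell_ms": 0},
    {"id": "shoes_white", "type": "shoes", "color": "white", "brand": "BrandX", "price": 110.0, "dwell_ms": 0},
    {"id": "jacket", "type": "jacket", "color": "black", "brand": "Adidas", "price": 150.0, "dwell_ms": 150},
]
print(resolve_reference("the blue one", elements), resolve_reference("the jacket", elements))
```

Both the blue tracksuit and the blue shoes match "the blue one"; gaze dwell time is what breaks the tie in favor of the tracksuit.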

Finally, a semantic interpretation is performed based on the information gathered so far. Google knows that in the query "Find me something that's cheaper than the blue one, but is the same brand as the jacket", "cheaper" is measured against the tracksuit's price and "the same brand" means Adidas, the jacket's brand. With that resolved, Google can display Adidas items priced below $200, such as Adidas tracksuits.
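Once both references are resolved, the query reduces to two constraints: the same brand as the jacket and a price below the tracksuit's. A minimal sketch, assuming a hypothetical `Product` record and an invented catalog; only the $200 price and the Adidas brand come from the example, and the tracksuit's own brand is a placeholder.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    name: str
    brand: str
    price: float


def cheaper_than_same_brand_as(catalog, price_ref, brand_ref):
    """Products cheaper than price_ref and made by the same brand as brand_ref."""
    return [p for p in catalog
            if p.brand == brand_ref.brand and p.price < price_ref.price]


# The two referents resolved from the user's gaze.
blue_tracksuit = Product("blue tracksuit", "BrandX", 200.0)
jacket = Product("jacket", "Adidas", 150.0)

# A hypothetical catalog to search.
catalog = [
    Product("Adidas tracksuit", "Adidas", 180.0),
    Product("Adidas premium tracksuit", "Adidas", 240.0),
    Product("Adidas sneakers", "Adidas", 95.0),
    Product("BrandX hoodie", "BrandX", 60.0),
]
for p in sorted(cheaper_than_same_brand_as(catalog, blue_tracksuit, jacket),
                key=lambda p: p.price):
    print(f"{p.name}: ${p.price:.2f}")
```

Note that "cheaper than" is a strict comparison here, so an item priced at exactly $200 would be excluded.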

Ideally, all of this happens fast enough that users notice no extra delay when their voice queries are processed.

Voice Search SEO Is Next Generation SEO

Voice search is undoubtedly the next generation of SEO. As Google adapts to serve the needs of mobile users, we need to adapt as well, presenting information to search engines in a way that lets them understand our pages properly. The example above highlights the importance of metadata on a webpage, such as the structured data markup behind rich snippets. Are you ready for voice search SEO? Please share your comments below.
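As one concrete way to expose this kind of metadata, a product page can describe itself with schema.org `Product` markup in JSON-LD, a structured data format Google documents for product rich results. The helper `product_jsonld` below is a hypothetical sketch that builds such a snippet; the product name and brand are placeholders, and nothing in the patent says that voice search reads this markup.

```python
import json


def product_jsonld(name, brand, color, price, currency="USD"):
    """Build a schema.org Product snippet in JSON-LD for embedding in a page."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "color": color,
        "brand": {"@type": "Brand", "name": brand},
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",    # schema.org accepts price as text or number
            "priceCurrency": currency,  # ISO 4217 currency code
        },
    }
    return ('<script type="application/ld+json">\n'
            + json.dumps(data, indent=2)
            + "\n</script>")


# Placeholder product details for illustration.
print(product_jsonld("Classic Tracksuit", "ExampleBrand", "blue", 200))
```

The price, brand and color fields mirror the kinds of metadata the gaze example relies on.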